RMark: data.table merge vs core merge

R-bloggers 2013-03-21

(This article was first published on XRGB, and kindly contributed to R-bloggers)

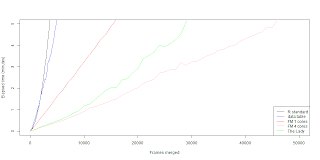

This is the third post on fast merging in R; the first is here and the second is here. This time we look at how the merge function from the data.table package performs in our case, as requested by Uwe Block. As a reminder, the first post built a merging scheme around the lapply function, and the second looked at how it translates to a parallel solution for even more speed.

The problem is the following: we are given N files, each containing a random number of variables. How do we merge these files into one complete data set within a reasonable amount of time? For example, imagine a medical instrument that records each run (patient) to a separate file, and only the values it actually measured are written to that file. In practice I'm using Eve Online killmails. So far I have around 160k mails, hence the need for a fast solution.

Personally I haven't really used data.table, but it looks good enough, so I recommend checking it out. In a sense nothing has really changed on my code's part. I wrote a fast merging function based on the data.table merge, called the lady, but I won't give the code because it isn't fast enough (spoiler alert). Note also that merge is a generic function, meaning that if you pass data.tables to merge it automatically uses the data.table merge method. But enough of this prattle. Here are the results.

The data.table merge is faster than the R core merge function if you are merging in a for-loop. The problem remains that data.table merging in a for-loop appears to run in exponential time, which doesn't translate well to big data. The single-core fast merging scheme still beats the data.table merge in a for-loop. The biggest wonder is why we get a slower outcome when we combine the data.table merge with the parallel fast merging scheme. Naturally I thought we could squeeze out a few seconds with a low frame count, but it seems that it's actually a few minutes worse.
So far I'm running dry on practical ideas and reasons why the lady seems so fat. Maybe it doesn't clean up its diet? (Memory management?) If you have some insight, please comment.
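For readers who have not seen the earlier posts, the for-loop merge discussed above can be sketched roughly as follows. This is a minimal base R illustration under stated assumptions, not the code that was benchmarked: the helper names make_record, merge_loop and merge_pairwise are hypothetical, the input is simulated rather than read from files, and the pairwise variant is only one plausible shape for a faster scheme, not necessarily the one from the first post.

```r
# Hypothetical stand-in for the real input: each "file" becomes a one-row
# data frame holding an id plus a random subset of the possible variables.
make_record <- function(id, vars = paste0("v", 1:10)) {
  picked <- sort(sample(vars, size = sample(seq_along(vars), 1)))
  values <- as.list(round(runif(length(picked)), 3))
  names(values) <- picked
  data.frame(id = id, values, stringsAsFactors = FALSE)
}

set.seed(1)
records <- lapply(1:50, make_record)

# For-loop merge: the accumulated result is merged (and copied) again on
# every iteration, so the work per step grows with the size of the result.
merge_loop <- function(frames) {
  out <- frames[[1]]
  for (frame in frames[-1]) {
    # all = TRUE keeps every row and fills missing variables with NA
    out <- merge(out, frame, all = TRUE, sort = FALSE)
  }
  out
}

# Assumed alternative: merge neighbouring pairs with lapply and repeat until
# one data frame is left, so most merges operate on small inputs.
merge_pairwise <- function(frames) {
  while (length(frames) > 1) {
    idx <- seq(1, length(frames), by = 2)
    frames <- lapply(idx, function(i) {
      if (i == length(frames)) return(frames[[i]])  # odd one out, keep as is
      merge(frames[[i]], frames[[i + 1]], all = TRUE, sort = FALSE)
    })
  }
  frames[[1]]
}

merged_loop <- merge_loop(records)
merged_pair <- merge_pairwise(records)
```

Wrapping either call in system.time() and increasing the number of records is a simple way to see how the two shapes scale. Because merge is generic, passing data.tables instead of data frames would route the same merge call to the data.table method.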

