Stirling’s Formula from Statistical Mechanics

Azimuth 2024-08-28

Physicists like to study all sorts of simplified situations, but here’s one I haven’t seen them discuss. I call it an ‘energy particle’. It’s an imaginary thing with no qualities except energy, which can be any number $\ge 0$.

I hate it when on Star Trek someone says “I’m detecting an energy field” — as if energy could exist without any specific form. That makes no sense! Yet here I am, talking about energy particles.

Earlier on the n-Café, I once outlined a simple proof of Stirling’s formula using Laplace’s method. When I started thinking about statistical mechanics, I got interested in an alternative proof using the Central Limit Theorem, mentioned in a comment by Mark Meckes. Now I want to dramatize that proof using energy particles.

The basic idea

Stirling’s formula says

$$ n! \sim \sqrt{2 \pi n} \, \left(\frac{n}{e}\right)^n $$

where $\sim$ means that the ratio of the two quantities goes to $1$ as $n \to \infty$.
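As a quick sanity check, here is a minimal Python sketch that computes the ratio of $n!$ to Stirling’s approximation for a few values of $n$. The function names `stirling` and `stirling_ratio` are just illustrative choices, not anything from the post.

```python
import math

def stirling(n: int) -> float:
    """Stirling's approximation sqrt(2*pi*n) * (n/e)**n."""
    return math.sqrt(2 * math.pi * n) * (n / math.e) ** n

def stirling_ratio(n: int) -> float:
    """Ratio n! / stirling(n); Stirling's formula says this tends to 1."""
    return math.factorial(n) / stirling(n)

# The ratio stays slightly above 1 and shrinks toward 1 as n grows.
for n in (1, 10, 100):
    print(n, stirling_ratio(n))
```

The ratio only creeps toward 1, which is the sense in which the formula is asymptotic rather than exact.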

Some proofs start with the observation that

$$ n! = \int_0^\infty x^n e^{-x} \, dx $$

This says that $n!$ is the Laplace transform of the function $x^n$, evaluated at $1$.

Laplace transforms are important in statistical mechanics. So what is this particular Laplace transform, and Stirling’s formula, telling us about statistical mechanics?

It turns out this Laplace transform shows up naturally when you consider a collection of energy particles!

Statistical mechanics says that the probability for an energy particle to have energy $E$ follows an exponential distribution: at temperature $T$ it’s proportional to

$$ \exp(-E/kT) $$

where $k$ is Boltzmann’s constant. From this you can show the expected energy of this particle is $kT$, and the standard deviation of its energy is also $kT$.

Next suppose you have $n$ energy particles at temperature $T$, not interacting with each other. Each one acts as above and they’re all independent. As $n \to \infty$, you can use the Central Limit Theorem to show the probability distribution of their total energy approaches a Gaussian with mean $nkT$ and standard deviation $\sqrt{n} \, kT$.

But you can also compute the probability distribution exactly from first principles, and you get an explicit formula for it. Comparing this to the Gaussian, you get Stirling’s formula!

In particular, the $\sqrt{2 \pi}$ that you see in a Gaussian gives the $\sqrt{2 \pi}$ in Stirling’s formula.

The math behind this argument is here, without any talk of physics:

• Aditya Ghosh, A probabilistic proof of Stirling’s formula.

The only problem is that it contains a bunch of rather dry calculations. If we use energy particles, these calculations have a physical meaning!

The downside to using energy particles is that you need to know some physics. So let me teach you that. If you know statistical mechanics well, you can probably skip this next section. If you don’t, it’s probably more important than anything else I’ll tell you today.

Classical statistical mechanics

When we combine classical mechanics with probability theory, we can use it to understand concepts like temperature and heat. This subject is called classical statistical mechanics. Here’s how I start explaining it to mathematicians.

A classical statistical mechanical system is a measure space $(X, \mu)$ equipped with a measurable function

$$ H \colon X \to [0, \infty) $$

We call $X$ the state space, call points in $X$ the states of our system, and call $H$ its Hamiltonian: this assigns a nonnegative number called the energy $H(x)$ to any state $x \in X$.

When our system is in thermal equilibrium at temperature $T$ we can ask: what’s the probability of the system’s state $x$ being in some subset of $X$? To answer this question we need a probability measure on $X$. We’ve already got a measure $\mu$, but Boltzmann told us the chance of a system being in a state of energy $E$ is proportional to

$$ e^{-\beta E} $$

where $\beta = 1/kT$, with $T$ being temperature and $k$ being a physical constant called Boltzmann’s constant. So we should multiply $\mu$ by $e^{-\beta H}$.

But then we need to normalize the result to get a probability measure. We get this:

$$ \frac{e^{-\beta H} \, \mu}{\int_X e^{-\beta H} \, d\mu} $$

This is the so-called Gibbs measure. The normalization factor on bottom is called the partition function

$$ Z(\beta) = \int_X e^{-\beta H} \, d\mu $$

and it winds up being very important. (I’ll assume the integral here converges, though it doesn’t always.)
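To make the Gibbs measure concrete, here is a minimal sketch for the simplest case I can think of: a finite state space with counting measure, so that the integral defining the partition function becomes a sum. The three-state system and the name `gibbs_measure` are hypothetical, chosen only for illustration, and units are picked so that $k = 1$.

```python
import math

def gibbs_measure(energies, beta):
    """Partition function and Gibbs probabilities for a finite system.

    Assumption: the state space is finite with counting measure, so
    `energies` lists H(x) for each state x and integrals become sums.
    """
    weights = [math.exp(-beta * E) for E in energies]
    Z = sum(weights)                      # partition function Z(beta)
    return Z, [w / Z for w in weights]    # normalized Boltzmann weights

# Hypothetical three-state system with energies 0, 1, 2 and k = 1.
for T in (0.1, 1.0, 100.0):
    Z, probs = gibbs_measure([0.0, 1.0, 2.0], beta=1.0 / T)
    print(T, Z, probs)
```

At low temperature almost all the probability sits on the lowest-energy state, while at high temperature the three states become nearly equally likely.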

In this setup we can figure out the probability distribution of energies that our system has at any temperature. I’ll just tell you the answer. For this it’s good to let

$$ \nu(E) = \mu(\{x \in X : H(x) \le E\}) $$

be the measure of the set of states with energy $\le E$. Very often

$$ d\nu(E) = g(E) \, dE $$

for some integrable function $g$. In other words,

$$ g = \frac{d\nu}{dE} $$

where the expression at right is called a Radon–Nikodym derivative. Physicists call $g$ the density of states because if we integrate it over some interval $(E_1, E_2]$ we get ‘the number of states’ in that energy range. That’s how they say it, anyway. What we actually get is the measure of the set

$$ \{x \in X : E_1 < H(x) \le E_2\} $$

We can express the partition function in terms of $g$, and we get this:

$$ Z(\beta) = \int_0^\infty e^{-\beta E} \, g(E) \, dE $$

So we say the partition function is the Laplace transform of the density of states.

We can also figure out the probability distribution of energies that our system has at any temperature, as promised. We get this function of $E$:

$$ \frac{e^{-\beta E} \, g(E)}{Z(\beta)} $$

This should make intuitive sense: we take the density of states and multiply it by $e^{-\beta E}$, following Boltzmann’s idea that the probability for the system to be in a state of energy $E$ decreases exponentially with $E$ in this way. To get a probability distribution, we then normalize this.

An energy particle

Now let’s apply statistical mechanics to a very simple system that I haven’t seen physicists discuss.

An energy particle is a hypothetical thing that only has energy, whose energy can be any nonnegative real number. So it’s the classical statistical mechanical system whose measure space is $[0, \infty)$ with Lebesgue measure, and whose Hamiltonian is the identity function:

$$ H(E) = E $$

We can answer some questions about energy particles using the stuff I explained in the last section:

1) What’s the density of states of an energy particle? It’s just 1.

2) What’s the partition function of an energy particle? It’s

$$ Z(\beta) = \int_0^\infty e^{-\beta E} \, dE = \frac{1}{\beta} $$

3) What’s the probability distribution of energies of an energy particle? It’s the exponential distribution

$$ \beta e^{-\beta E} $$

4) What’s the mean energy of an energy particle? It’s the mean of the above probability distribution, which is $1/\beta = kT$.

5) What’s the variance of the energy of an energy particle? It’s $1/\beta^2 = (kT)^2$.

Nice! We’re in math heaven here, where everything is simple and beautiful. We completely understand a single energy particle.
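These answers are easy to check numerically. The sketch below approximates the relevant integrals with a midpoint rule, truncating $[0, \infty)$ at a cutoff of my own choosing; the helper names and the value $\beta = 2$ are illustrative assumptions.

```python
import math

def integrate(f, a, b, steps=100_000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / steps
    return h * sum(f(a + (i + 0.5) * h) for i in range(steps))

def energy_particle_stats(beta, cutoff_factor=50.0):
    """Partition function, mean and variance for one energy particle.

    Assumption: integrals over [0, inf) are truncated at
    cutoff_factor / beta, where exp(-beta * E) is negligible.
    """
    upper = cutoff_factor / beta
    Z = integrate(lambda E: math.exp(-beta * E), 0.0, upper)

    def p(E):
        # Gibbs probability density in E (density of states is 1).
        return math.exp(-beta * E) / Z

    mean = integrate(lambda E: E * p(E), 0.0, upper)
    second_moment = integrate(lambda E: E * E * p(E), 0.0, upper)
    return Z, mean, second_moment - mean ** 2

beta = 2.0
print(energy_particle_stats(beta))            # numerical Z, mean, variance
print(1 / beta, 1 / beta, 1 / beta ** 2)      # the closed-form answers
```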

But I really want to understand a finite collection of energy particles. Luckily, there’s a symmetric monoidal category of classical statistical mechanical systems, so we can just tensor a bunch of individual energy particles. Whoops, that sounds like category theory! We wouldn’t want that — physicists might get scared. Let me try again.

The next section is well-known stuff, but also much more important than anything about ‘energy particles’.

Combining classical statistical mechanical systems

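There’s a standard way to combine two classical statistical mechanical systems and get a new one. To do this, we take the product of their underlying measure spaces and add their Hamiltonians. More precisely, suppose our systems are $(X, \mu, H)$ and $(X', \mu', H')$.

Then we form the product space $X \times X'$, give it the product measure $\mu \otimes \mu'$, and define the Hamiltonian

$$ H \otimes H' \colon X \times X' \to [0, \infty) $$

by

$$ (H \otimes H')(x, x') = H(x) + H'(x') $$

We get a new system $(X \times X', \mu \otimes \mu', H \otimes H')$.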

When we combine systems in this way, a lot of nice things happen:

1) The density of states for the combined system is obtained by convolving the densities of states for the two separate systems.

2) The partition function of the combined system is the product of the partition functions of the two separate systems.

3) At any temperature, the probability distribution of energies of the combined system is obtained by convolving those of the two separate systems.

4) At any temperature, the mean energy of the combined system is the sum of the mean energies of the two separate systems.

5) At any temperature, the variance in energy of the combined system is the sum of the variances in energies of the two separate systems.

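The last three of these follow from standard ideas in probability theory. For each value of $\beta$, the rules of statistical mechanics give this probability measure on the state space of the combined system:

$$ \frac{e^{-\beta (H(x) + H'(x'))} \, d\mu(x) \, d\mu'(x')}{\int_{X \times X'} e^{-\beta (H(x) + H'(x'))} \, d\mu(x) \, d\mu'(x')} $$

But this is a product measure: it’s clearly the same as

$$ \frac{e^{-\beta H(x)} \, d\mu(x)}{\int_X e^{-\beta H(x)} \, d\mu(x)} \otimes \frac{e^{-\beta H'(x')} \, d\mu'(x')}{\int_{X'} e^{-\beta H'(x')} \, d\mu'(x')} $$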

Thus, in the language of probability theory, the energies of the two systems being combined are independent random variables. Whenever this happens, we convolve their probability distributions to get the probability distribution of their sum. The mean of their sum is the sum of their means. And the variance of their sum is the sum of their variances!

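It’s also easy to see why the partition functions multiply. This just says

$$ \int_{X \times X'} e^{-\beta (H(x) + H'(x'))} \, d\mu(x) \, d\mu'(x') = \left( \int_X e^{-\beta H(x)} \, d\mu(x) \right) \left( \int_{X'} e^{-\beta H'(x')} \, d\mu'(x') \right) $$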

Finally, since the partition functions multiply, and the partition function is the Laplace transform of the density of states, the densities of states must convolve: the Laplace transform sends convolution to multiplication.
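Here is a small numerical illustration of the convolution rules, using two energy particles as the systems being combined. It’s only a sketch: the value of $\beta$, the energy $E = 2$ and the helper `convolve_at` are my own illustrative choices. For two energy particles the density of states $1$ convolved with itself should give $E$, and the exponential distribution convolved with itself should give $\beta^2 E e^{-\beta E}$.

```python
import math

def convolve_at(p, q, E, steps=10_000):
    """Midpoint-rule value of (p * q)(E), the integral of p(s) q(E - s) for s in [0, E]."""
    h = E / steps
    return h * sum(p((i + 0.5) * h) * q(E - (i + 0.5) * h) for i in range(steps))

beta = 1.5  # arbitrary illustrative value of 1/kT

def density_of_states(E):
    """Density of states of one energy particle: constant 1."""
    return 1.0

def energy_distribution(E):
    """Exponential distribution of one energy particle's energy."""
    return beta * math.exp(-beta * E)

E = 2.0
# Densities of states convolve: 1 * 1 evaluated at E should equal E.
print(convolve_at(density_of_states, density_of_states, E), E)
# Energy distributions convolve: expect beta^2 * E * exp(-beta * E).
print(convolve_at(energy_distribution, energy_distribution, E),
      beta ** 2 * E * math.exp(-beta * E))
```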

A system of $n$ energy particles

You can iterate the above arguments to understand what happens when you combine any number of classical statistical mechanical systems. For a system of $n$ energy particles the state space is $[0, \infty)^n$, with Lebesgue measure as its measure. The energy is the sum of all their individual energies, so it’s

$$ H(E_1, \dots, E_n) = E_1 + \cdots + E_n $$

Let’s work out the density of states $g$. We could do this by convolution but I prefer to do it from scratch; it amounts to the same thing. The measure of the set of states with energy $\le E$ is

$$ \nu(E) = \mu(\{(E_1, \dots, E_n) \in [0, \infty)^n : E_1 + \cdots + E_n \le E\}) $$

This is just the Lebesgue measure of the simplex

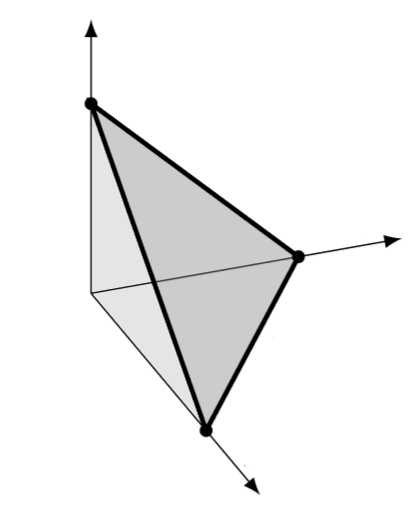

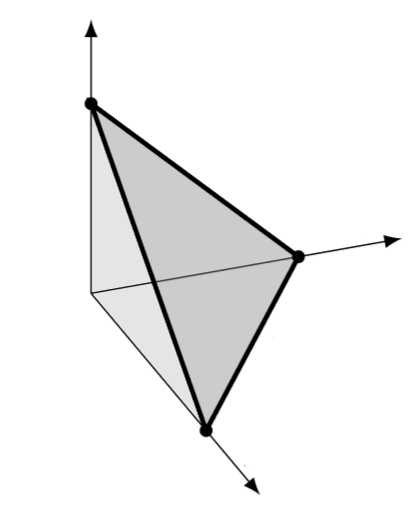

I hope you’re visualizing this simplex, for example when :

Its volume is well known to be times that of the hypercube

:

Differentiating this we get the density of states:

So, the partition function of a collection of $n$ energy particles is

In the last step I might have done the integral, or I might have used the fact that we already know the answer.

You may wonder why we’re getting these factors of when studying

energy particles. If If you think about it, you’ll see why. The density of states is the derivative of the volume of this

-simplex as a function of

:

But that’s the area of the -simplex shown in darker gray, which is
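The claim that the simplex fills $1/n!$ of the hypercube $[0, E]^n$ can be tested by a quick Monte Carlo experiment. This is a rough sketch, not a proof: by rescaling we may take $E = 1$, and the sample size, seed and function name are arbitrary choices of mine.

```python
import math
import random

def simplex_fraction(n, samples=100_000, seed=0):
    """Estimate the fraction of [0,1]^n where E_1 + ... + E_n <= 1."""
    rng = random.Random(seed)
    hits = sum(
        1 for _ in range(samples)
        if sum(rng.random() for _ in range(n)) <= 1.0
    )
    return hits / samples

# Compare the Monte Carlo estimate with 1/n! for small n.
for n in (2, 3, 4):
    print(n, simplex_fraction(n), 1 / math.factorial(n))
```

The estimates carry statistical noise of order $1/\sqrt{\text{samples}}$, so they should agree with $1/n!$ only to two or three decimal places.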

Now we know everything we want:

1) What’s the density of states of $n$ energy particles? It’s $E^{n-1}/(n-1)!$.

2) What’s the partition function of $n$ energy particles? It’s $\beta^{-n}$.

3) What’s the probability distribution of energies of $n$ energy particles? It’s the so-called gamma distribution

$$ \frac{\beta^n E^{n-1} e^{-\beta E}}{(n-1)!} $$

4) What’s the mean total energy of $n$ energy particles? It’s $n/\beta = nkT$.

5) What’s the variance of the total energy of $n$ energy particles? It’s $n/\beta^2 = n(kT)^2$.

Now that we’re adding independent and identically distributed random variables, you can tell we are getting close to the Central Limit Theorem, which says that a sum of a bunch of those approaches a Gaussian — at least when each one has finite mean and variance, as holds here.
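To see the mean and variance of the total energy come out as $n/\beta$ and $n/\beta^2$, one can simply simulate: draw each particle’s energy from the exponential distribution and add them up. The sketch below does this with Python’s `random.expovariate`; the values $n = 5$ and $\beta = 2$, the sample size and the seed are illustrative assumptions.

```python
import random

def total_energy_samples(n, beta, samples=50_000, seed=0):
    """Sample the total energy of n independent energy particles.

    Each particle's energy is drawn from the exponential distribution
    beta * exp(-beta * E) using random.expovariate.
    """
    rng = random.Random(seed)
    return [sum(rng.expovariate(beta) for _ in range(n)) for _ in range(samples)]

n, beta = 5, 2.0  # illustrative values, not from the post
energies = total_energy_samples(n, beta)
mean = sum(energies) / len(energies)
variance = sum((E - mean) ** 2 for E in energies) / len(energies)

print(mean, n / beta)            # sample mean vs n/beta
print(variance, n / beta ** 2)   # sample variance vs n/beta^2
```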

Stirling’s formula

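What is the probability distribution of energies of a system made of $n$ energy particles? We’ve used statistical mechanics to show that it’s

$$ \frac{\beta^n E^{n-1} e^{-\beta E}}{(n-1)!} $$

But the energy of each particle has mean $1/\beta$ and variance $1/\beta^2$, and these energies are independent random variables. So the Central Limit Theorem says their sum is asymptotic to a Gaussian with mean $n/\beta$ and variance $n/\beta^2$, namely

$$ \frac{\beta}{\sqrt{2 \pi n}} \exp\left( -\frac{(\beta E - n)^2}{2n} \right) $$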

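We obtain a complicated-looking asymptotic formula:

$$ \frac{\beta^n E^{n-1} e^{-\beta E}}{(n-1)!} \sim \frac{\beta}{\sqrt{2 \pi n}} \exp\left( -\frac{(\beta E - n)^2}{2n} \right) $$

But if we simplify this by taking $\beta = 1$ we get

$$ \frac{E^{n-1} e^{-E}}{(n-1)!} \sim \frac{1}{\sqrt{2 \pi n}} \exp\left( -\frac{(E - n)^2}{2n} \right) $$

and if we then take $E = n$ we get

$$ \frac{n^{n-1} e^{-n}}{(n-1)!} \sim \frac{1}{\sqrt{2 \pi n}} $$

Fiddling around a bit (multiply the top and bottom of the left side by $n$, so it becomes $n^n e^{-n} / n!$) we get Stirling’s formula:

$$ n! \sim \sqrt{2 \pi n} \, \left(\frac{n}{e}\right)^n $$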
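The comparison between the exact energy distribution and the Gaussian can also be checked numerically at the point $E = n$ with $\beta = 1$, where the ratio of the two densities is exactly $\sqrt{2 \pi n} \, (n/e)^n / n!$. This is a sketch with function names of my own choosing; it works with logarithms via `math.lgamma` so that large $n$ doesn’t overflow.

```python
import math

def gamma_density(E, n, beta=1.0):
    """Exact energy distribution beta^n E^(n-1) exp(-beta E) / (n-1)!.

    Computed through logarithms, using math.lgamma(n) = log((n-1)!).
    """
    log_p = n * math.log(beta) + (n - 1) * math.log(E) - beta * E - math.lgamma(n)
    return math.exp(log_p)

def gaussian_density(E, n, beta=1.0):
    """Gaussian with mean n/beta and variance n/beta^2."""
    return beta / math.sqrt(2 * math.pi * n) * math.exp(-(beta * E - n) ** 2 / (2 * n))

for n in (10, 100, 10_000):
    # At beta = 1 and E = n this ratio equals sqrt(2 pi n) (n/e)^n / n!.
    print(n, gamma_density(n, n) / gaussian_density(n, n))
```

The ratio approaching 1 is Stirling’s formula seen numerically. It illustrates the pointwise comparison that the argument uses, without justifying it.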

Unfortunately this argument isn’t rigorous yet: I’m acting like the Central Limit Theorem implies pointwise convergence of probability distributions to a Gaussian, but it’s not that strong. So we need to work a bit harder. For the details I leave you to Aditya Ghosh’s article.

But my goal here was not really to dot every i and cross every t. It was to show that Stirling’s formula emerges naturally from applying standard ideas in statistical mechanics and the Central Limit Theorem to large collections of identical systems of a particularly simple sort.